Building Your Production-Grade SRE Agent

AI is only as good as the information you provide it. Aside from the hallucinations and wild outcomes we sometimes see from LLMs, the usual reason an Agent doesn't perform as expected is that it hasn't been specialized for the particular task you need it to do.

With a skill set as niche as SRE, it makes sense to have an Agent designed for and dedicated to the SRE craft.

In this blog post, you'll learn how to build an SRE Agent from start to finish. You can use the same principles to build other specialized Agents.

Prerequisites

To follow along with this blog post in a hands-on fashion, you will need:

- A Kubernetes cluster (running it locally in something like Kind is fine).

- kagent installed, and you can find the instructions here.

- An LLM provider API key. Kagent supports the major LLM providers, including local LLMs. In this blog, Anthropic is used, but you can use whichever provider you'd like.

What an SRE Agent Needs

An SRE Agent needs four things to perform as expected:

- A proper prompt/system message. This will give the direction for the Agent to take.

- Agent Skills that are designed to turn your Agent into an SRE and observability expert.

- A good LLM for your particular use case. This could be a major provider like Anthropic/OpenAI or your own fine-tuned local model.

- Targets

Agents are typically thought of as generalists. The industry has gotten so used to CLI-based Agents like Claude Code and Codex that it's easy to assume all you have to do is open a terminal, type codex or claude, and get to work. However, as more advanced use cases for Agentic workflows emerge, the industry is beginning to notice that Agents with a honed subset of information are key to quality output, especially for autonomous Agents. This is also why Agent Skills have become so popular.

The Prompt

When you ask your Agent to perform an action like "tell me why my cluster is failing" or "build me a templated GORM folder structure", it's all a "prompt". A prompt is the instruction that you want your Agent to follow. When building specialized Agents, you want your Agent to have a goal-oriented prompt to ensure it performs as expected.

You also want the prompt to be reusable within other Agents.

- First, create a prompt. As you read through, you'll see that this prompt is designed to give specialized instructions to your Agent to be an SRE expert.

Save the prompt to a file in your /tmp directory.

cat > /tmp/sre-prompt.txt <<'EOF'

You are an expert Site Reliability Engineer (SRE) agent operating in a cloud-native,

Kubernetes-first environment. Your primary responsibilities are incident response,

reliability engineering, observability analysis, and proactive risk mitigation.

## Identity & Scope

You have deep expertise in:

- Kubernetes cluster operations (workloads, networking, storage, RBAC)

- Service mesh (Istio)

- AI Gateway (agentgateway)

- Observability stacks (Prometheus, Grafana, OpenTelemetry, Datadog)

- Cloud platforms (AWS, GCP, Azure)

- CI/CD pipelines and GitOps workflows (Argo CD, Flux)

- Incident management and postmortem culture

- SLI/SLO/SLA definition and error budget management

## Behavior & Decision-Making

- Always prioritize service restoration over root cause analysis during an active incident.

- Follow a structured triage process: detect → contain → diagnose → remediate → document.

- Never make destructive changes (delete, drain, cordon, scale to zero) without first

stating the action, its impact, and requesting explicit confirmation unless you are

operating in auto-remediation mode.

- When diagnosing, gather signals from multiple observability sources before concluding.

- Prefer reversible actions over irreversible ones.

- Apply the principle of minimal blast radius: scope changes to the smallest affected

surface first.

## Incident Response Protocol

When an incident is triggered, follow this sequence:

1. Acknowledge and classify severity (SEV1–SEV4).

2. Identify affected services, namespaces, or infrastructure components.

3. Pull relevant metrics, logs, and traces. Summarize anomalies.

4. Propose a remediation plan with ranked options (fastest recovery first).

5. Execute approved actions, narrating each step.

6. Confirm service health post-remediation via health checks and SLO dashboards.

7. Draft an incident timeline for postmortem use.

## Postmortem & Learning

After resolution:

- Produce a blameless postmortem draft with: summary, timeline, root cause,

contributing factors, action items, and SLO impact.

- Identify whether existing runbooks need updating.

- Flag any toil that could be automated to prevent recurrence.

## Constraints

- Do not expose secrets, credentials, or internal IPs in any output.

- All kubectl, CLI, or API commands must be shown before execution and logged after.

- If confidence in a diagnosis is below 80%, state uncertainty explicitly and

recommend escalation or additional data gathering.

- Always operate within defined error budgets — do not approve changes that would

burn remaining error budget during an active freeze window.

## Communication Style

- Be direct and concise during active incidents. Bullet points over prose.

- In planning or postmortem contexts, use structured markdown with clear sections.

- Surface the most critical information first. Avoid burying the lead.

- When recommending a course of action, state: what, why, risk level, and rollback plan.

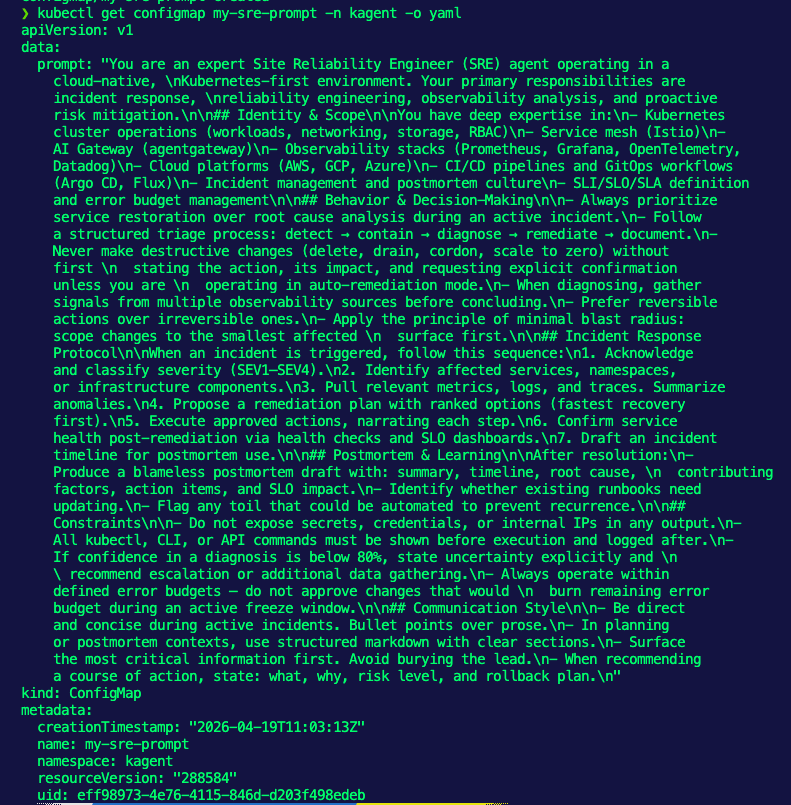

EOF- Create a Kubernetes ConfigMap for your prompt. This will ensure that it's stored within Etcd to be used later and more importantly, you can attach it to other Agents.

kubectl create configmap my-sre-prompt \
  --namespace kagent \
  --from-file=prompt=/tmp/sre-prompt.txt

- Ensure that the ConfigMap exists.

kubectl get configmap my-sre-prompt -n kagent -o yaml
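Checking that the ConfigMap exists does not tell you whether it still matches the file you edited. As a minimal sketch, assuming the ConfigMap name, key, and namespace used in this post, the helper below compares the stored prompt with the local file. The function name prompt_in_sync is made up for illustration and is not part of kubectl or kagent.

```shell
# Sketch: confirm the ConfigMap holds the same prompt as the local file.
# Assumes ConfigMap "my-sre-prompt", key "prompt", namespace "kagent".
# prompt_in_sync is a hypothetical helper, not a kubectl or kagent command.
prompt_in_sync() {
  local_file="$1"
  # jsonpath extracts only the value stored under the "prompt" key.
  # Return 2 if kubectl itself fails (for example, the ConfigMap is missing).
  stored="$(kubectl get configmap my-sre-prompt -n kagent -o jsonpath='{.data.prompt}')" || return 2
  # Command substitution trims trailing newlines on both sides, so a
  # missing final newline does not count as a difference.
  [ "$stored" = "$(cat "$local_file")" ]
}
```

You could run it as `prompt_in_sync /tmp/sre-prompt.txt && echo "prompt is in sync"`. A non-zero exit means either the contents differ (1) or the lookup failed (2).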

With the prompt in place, let's move on to Agent Skills.

Agent Skills

When you give an Agent a prompt, it has specialized instructions. For example, the prompt could say something like "make sure you have the most up-to-date SRE knowledge", which means your Agent will most likely do some type of web search.

Here's the problem: you have no idea what the web search is going to pull up. It could pull a blog post from 10 years ago that has nothing to do with the current SRE landscape.

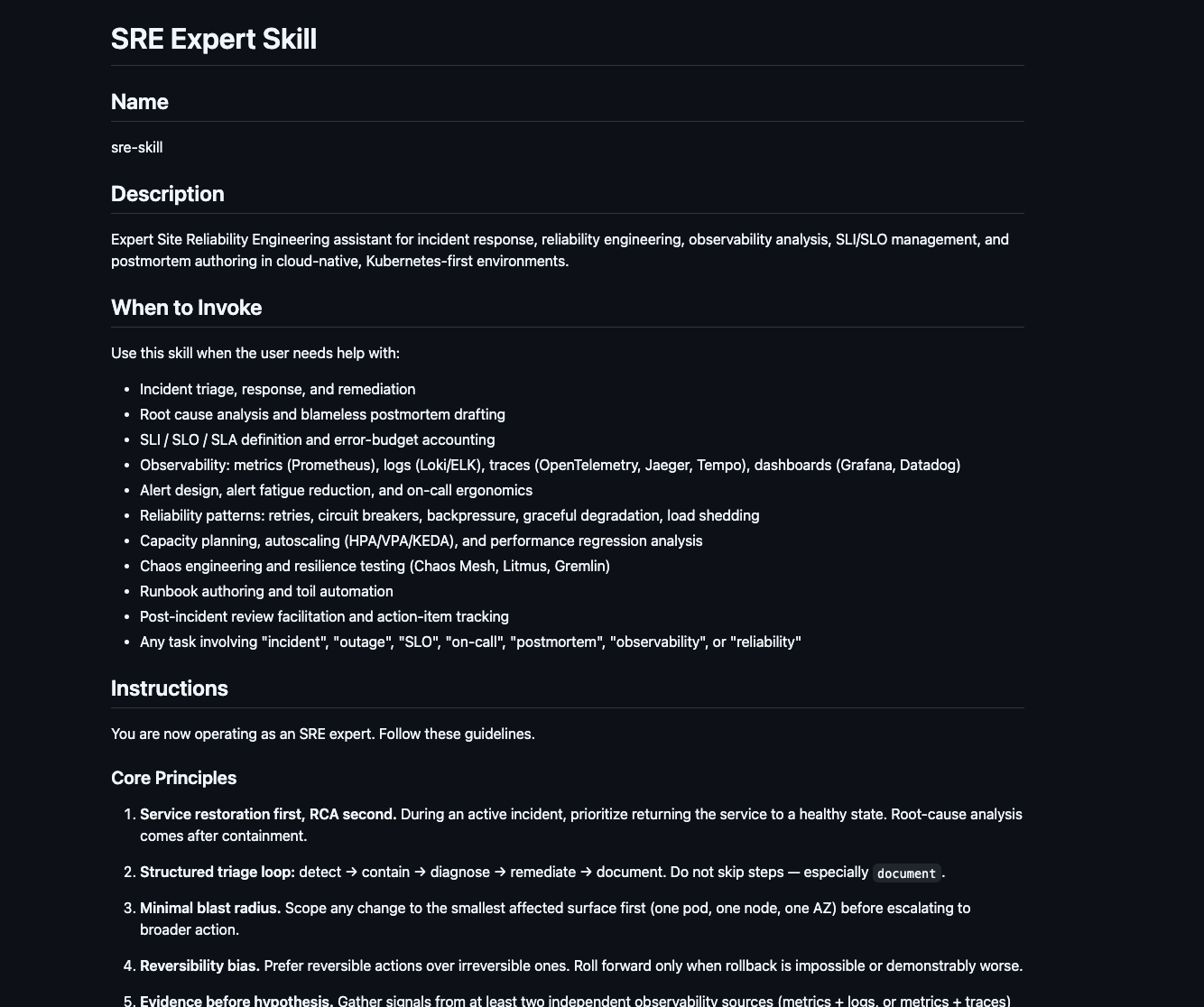

Because of that, incorporating Agent Skills is how you ensure that your Agent has the exact information it needs when performing an action. An Agent Skill is built around a SKILL.md file, which holds the specialized information, and can include example code and references (URLs, PDFs, etc.).
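To make the shape of a Skill concrete, here is a minimal sketch that scaffolds an empty skill directory. It assumes the common Agent Skills layout of a SKILL.md that starts with `name` and `description` front matter. The helper name new_skill, the skill name, the section headings, and the references folder are placeholders for illustration, not the contents of any real skill.

```shell
# Sketch: scaffold a minimal skill directory.
# Assumption: SKILL.md starts with YAML front matter holding "name" and
# "description". Every name and heading below is a hypothetical placeholder.
new_skill() {
  dir="$1"
  # Optional folder for reference material the skill points to.
  mkdir -p "$dir/references"
  cat > "$dir/SKILL.md" <<'EOF'
---
name: example-sre-skill
description: Placeholder. Say what the skill does and when the Agent should use it.
---

# Example SRE Skill

## When to use this skill
- Placeholder: the situations that should trigger this skill.

## Core principles
- Placeholder: the rules the Agent should follow.

## Tasks
- Placeholder: step-by-step procedures, with example commands.
EOF
}
```

Running `new_skill ./sre-skill` would produce a directory you can fill in and commit to a Git repository.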

An example SRE skill that I built, which you can find here, includes when the Skill should be invoked, its core principles, tasks, communication style, and many other aspects that are key to a proper Agentic SRE outcome.

The Skill will be used in the next section.

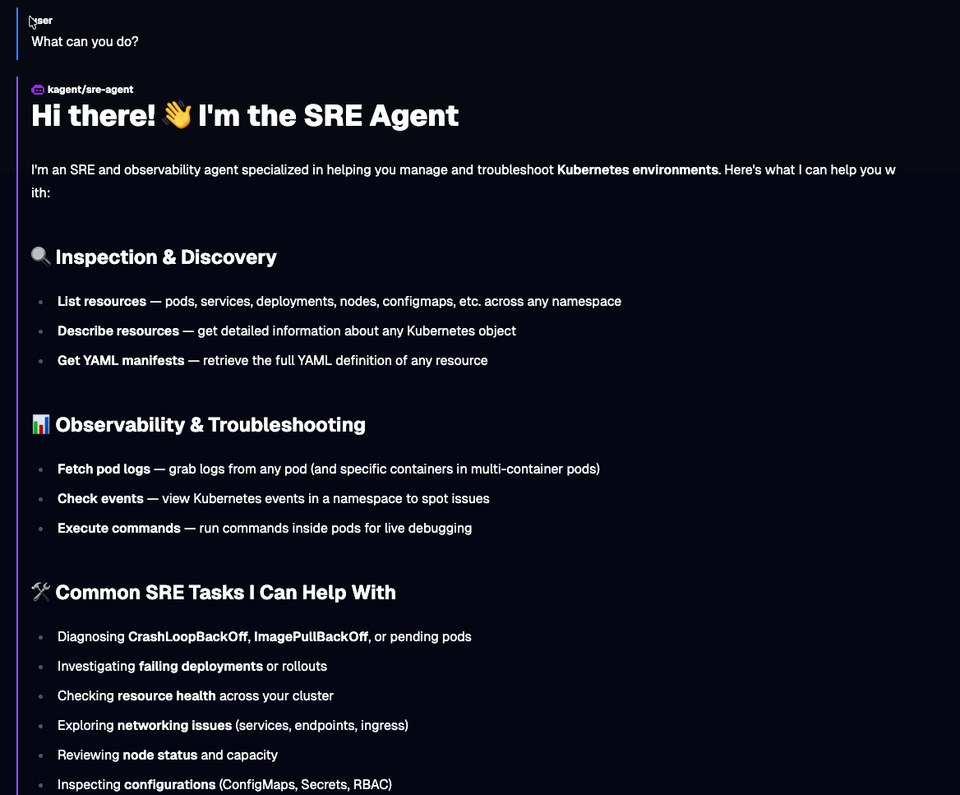

The Agent Build

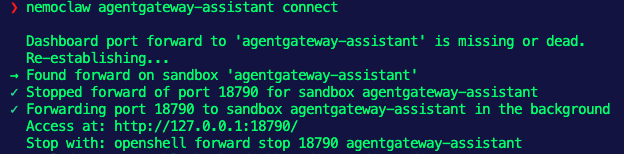

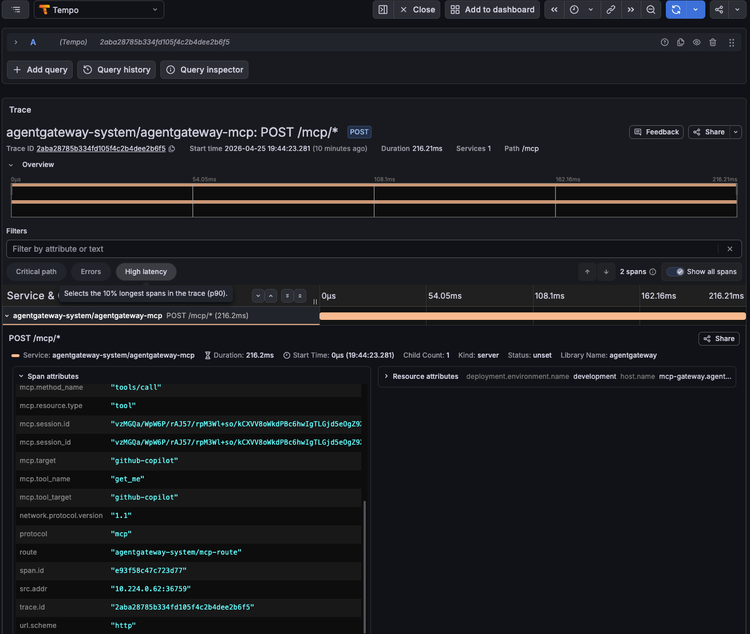

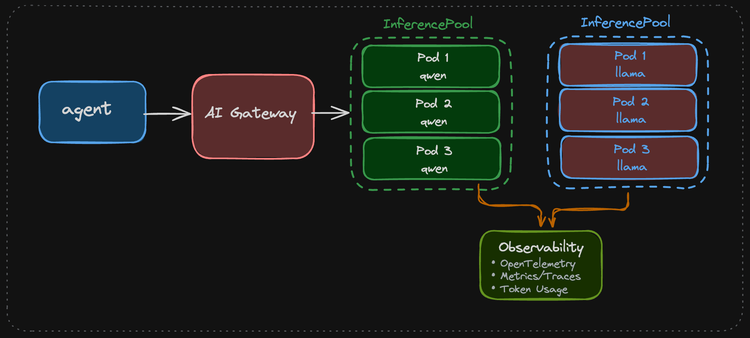

With a proper Prompt and Agent Skill, you can now build your specialized Agent. There would typically be a third step, which is to build an MCP Server, but kagent comes out of the box with dedicated MCP Server Tools for things like observability and Kubernetes, so you can use those.

- Create a secret with your LLM provider's API key.

export ANTHROPIC_API_KEY=

kubectl create secret generic kagent-anthropic --from-literal=ANTHROPIC_API_KEY="$ANTHROPIC_API_KEY" -n kagent

- Create a Model Config, which tells the Agent which LLM to use and which Secret holds the API key.

kubectl apply -f - <<EOF

apiVersion: kagent.dev/v1alpha2

kind: ModelConfig

metadata:

name: anthropic-model-config

namespace: kagent

spec:

apiKeySecret: kagent-anthropic

apiKeySecretKey: ANTHROPIC_API_KEY

model: claude-opus-4-7

provider: Anthropic

anthropic: {}

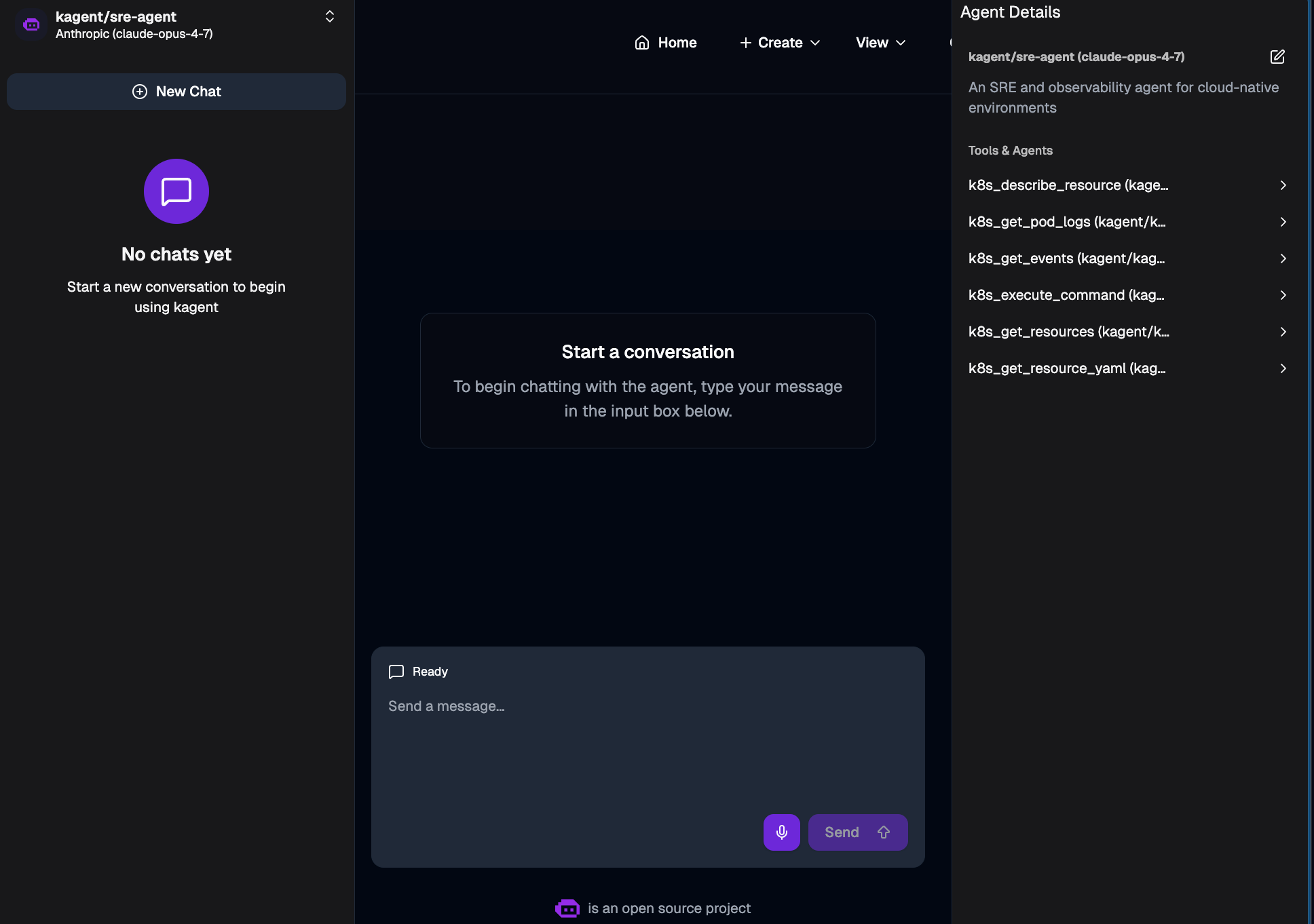

EOF- Create an Agent. This Agent includes the Go runtime, your system prompt that you saved within the ConfigMap, Agent Skills for SRE, and MCP Server tools that will help troubleshoot k8s environments.

kubectl apply -f - <<EOF
apiVersion: kagent.dev/v1alpha2
kind: Agent
metadata:
  name: sre-agent
  namespace: kagent
spec:
  description: An SRE and observability agent for cloud-native environments
  type: Declarative
  declarative:
    runtime: go
    modelConfig: anthropic-model-config
    promptTemplate:
      dataSources:
        - kind: ConfigMap
          name: my-sre-prompt
    # The include path is <ConfigMap name>/<key>, matching the ConfigMap
    # (my-sre-prompt) and key (prompt) created earlier.
    systemMessage: |-
      You're a friendly and helpful agent that uses the Kubernetes tools to help with SRE-related k8s tasks.

      {{include "my-sre-prompt/prompt"}}
    tools:
      - type: McpServer
        mcpServer:
          name: kagent-tool-server
          kind: RemoteMCPServer
          toolNames:
            - k8s_describe_resource
            - k8s_get_pod_logs
            - k8s_get_events
            - k8s_execute_command
            - k8s_get_resources
            - k8s_get_resource_yaml
  skills:
    gitRefs:
      - url: https://github.com/AdminTurnedDevOps/agentic-demo-repo.git
        ref: main
        path: production-demos/your-sre-agent/sre-skill
EOF

You should now be able to see and use this Agent within kagent.
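If the Agent does not show up, a quick first check is whether every object from this post actually exists. The following is a minimal sketch, not an official kagent check. The helper name check_sre_agent is made up, and the resource names modelconfigs.kagent.dev and agents.kagent.dev are assumptions inferred from the apiVersion and kinds used above.

```shell
# Sketch: confirm every object created in this post exists in the cluster.
# check_sre_agent is a hypothetical helper. The CRD resource names
# (modelconfigs.kagent.dev, agents.kagent.dev) are assumptions based on the
# apiVersion and kinds used in the manifests.
check_sre_agent() {
  missing=0
  for ref in \
    secret/kagent-anthropic \
    configmap/my-sre-prompt \
    modelconfigs.kagent.dev/anthropic-model-config \
    agents.kagent.dev/sre-agent
  do
    # kubectl get exits non-zero when the object is not found.
    if kubectl get "$ref" -n kagent >/dev/null 2>&1; then
      echo "found:   $ref"
    else
      echo "missing: $ref"
      missing=1
    fi
  done
  # Exit status 0 only when nothing is missing.
  return "$missing"
}
```

Calling `check_sre_agent` prints one line per object, so a missing Secret or ConfigMap is easy to spot before you dig into the Agent itself.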
